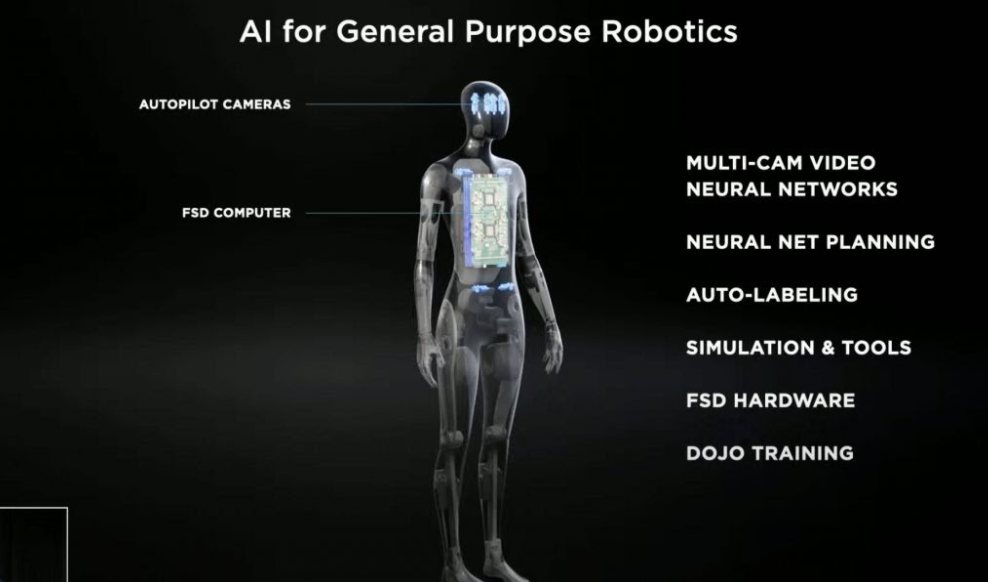

Elon Musk, who has previously warned that AI robots will take over, has announced a humanoid Tesla Bot.

Following an impressive set of announcements during Tesla’s AI Day, the company had one more surprise to share with its fans.

The self-driving car company displayed a video of a humanoid Tesla Bot while also bringing a dancing man on stage. The man was dressed like a robot and did what can only be described as a Fortnite dance while techno music played in the background.

https://twitter.com/rmac18/status/1428708172856918018

The dancing man was the closest the company got to showcasing any prototypes of its Tesla Bot. CEO Elon Musk, notorious for overpromising, said that Tesla’s humanoid robot “doesn’t work”.

Why would Tesla build a humanoid bot?

Many questioned why a self-driving car company would build a human-shaped robot in the first place. Elon Musk didn’t seem to have an answer himself. Instead, the ambitious CEO appears to have taken a “why not” approach.

He also referred to Tesla as “arguably the world’s biggest robotics company” since its cars are “semi-sentient robots on wheels”.

While his statement is technically true, it doesn’t answer why Tesla wouldn’t focus its efforts on the self-driving car industry instead.

Many believe that the Tesla Bot announcement was merely a recruitment pitch to get AI engineers excited about joining the company.

Elon Musk is backtracking on robots

Elon Musk himself has frequently warned of the dangers of artificially intelligent robots.

Among Musk’s portfolio is Neuralink, a company he co-founded to bring AI capabilities to humans using a surgically implanted brain chip. He said that such developments are necessary so that humans have a fighting chance against robots in the future.

In a speech a few years after co-founding Neuralink, Musk cautioned that “robots will be able to do everything better than us”, adding that “people should be really concerned by it.”

As such, it was no surprise that the cautionary CEO repeatedly assured his audience that the Tesla Bot would not put humanity in danger. With a maximum speed of 5 mph (8 km/h), Musk promised that the robot could be outrun and overpowered if necessary. To some awkward laughter, he then added that he hoped this would never be necessary.

Can robots take over humanity?

While the Tesla Bot will come with limitations to ensure it cannot overpower humanity, it makes the fears of a robot takeover much more real.

Due to technological limitations or intentional decisions, the company’s robots will be weaker than we are. However, as the tech becomes more advanced, what’s to stop a company from building robots that we have no chance of fighting?

This is precisely what robotics company Boston Dynamics is doing. Earlier this month, it shared an impressive YouTube video of its humanoid robots doing parkour. The video has over 8 million views on YouTube. Another video with 33 million views shows its robots dancing to celebrate the new year.

Boston Dynamics’ robots are not commercially ready, though they can easily overpower a human. The company classifies its current robotics work as research and development.

Will militaries use robots to fight humans?

As robotic technology becomes more advanced, we have seen the introduction of droid soldiers in the field.

While Boston Dynamics distances itself from military uses, the company initially developed many robots for the US military. Its robots’ terms and conditions now forbid their use “to harm or intimidate any person or animal, as a weapon, or to enable any weapon”.

Yet that hasn’t stopped police forces and militaries from using Spot, a robot modelled on a dog, to assist in their operations.

Earlier this year, École Spéciale Militaire de Saint-Cyr, a French military school, shared pictures of Spot being used alongside its trainee soldiers in military training. Soldiers reported that while the robot slowed them down, it also helped keep them safer.

However, the issue remains that such choices are made by the individual business and not some governmental regulation. In theory, Boston Dynamics could develop armed robotic soldiers for the US military if it chose to do so. That’s the decision taken by competitor Ghost Robotics.

The company has developed partnerships with US and Israeli military agencies, supplying them with robots that can assist soldiers on the ground. While such technology is marketed as a way to reduce civilian casualties, it’s easy to doubt the ethics of those military organisations after decades of oppression.

Many researchers have urged the use of “kill-switches” that can instantly disable robots if things go wrong. However, even such mechanisms leave the oppressed at the mercy of those deploying the robots.

It seems inevitable that powerful military organisations will decide when to use such technology without anyone else getting a say in its regulation. As robotics becomes more mainstream, even terrorist organisations will likely get access to such weaponry.

There is perhaps some light amidst this terrifying darkness, though. Firstly, several nations worldwide already have nuclear weapons that can wipe out countries within seconds, yet a shared interest in human survival has so far largely prevented their use.

Secondly, developing AI weaponry requires a substantial number of skilled people, and many of them seem to oppose their work being used in such a context. As technology gets more powerful, we’ll need to depend on the goodwill of humanity to keep us away from such bleak futures.

Do you think a war against robots is inevitable? Or will we introduce regulations to prevent such a future? Let us know in the comments.

Follow Doha News on Twitter, Instagram, Facebook and YouTube